This book by Steve Grand has been on my list for a while. It's his personal account of how he built a robot at home, with every intention of rivaling some of the most sophisticated research efforts of the time. Steve Grand, by the way, is the mastermind behind the popular Creatures series of artificial life simulation video games. His books were recommended to me several years ago by Twitter buddy Artem (@artydea), whom I unfortunately haven't seen around much recently. Thanks, Artem, if you're still out there.

|

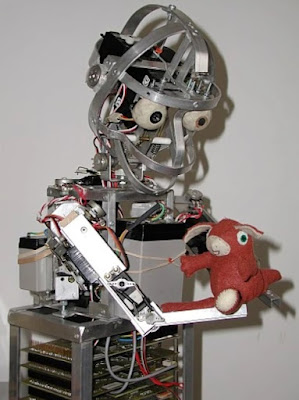

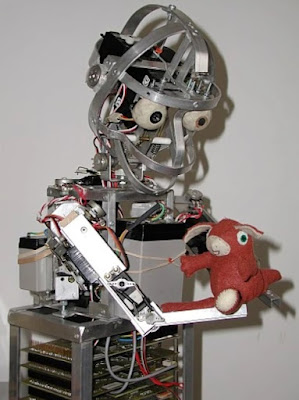

| Lucy, without her fur on. Photo credit: Creatures wikia. |

The magnum opus described in this book is the eponymous Lucy, who resembles an orangutan ... or at least the upper half of one. Lucy has no legs, but does have actuated arms, visual and auditory sensors, and a mouth and speech system. Grand aimed for a holistic approach (rather than focusing on just one function, such as vision) because he wanted to investigate the interactions and possible commonalities among the various sensory modalities and motor systems.

Once Grand started introducing himself, I quickly realized that I was hearing from someone more or less like me: an educated-layman tinkerer, working independently. Grand produced Lucy on his own initiative, without the backing of a university or corporation, though toward the end of the project he received a grant from NESTA (the UK's National Endowment for Science, Technology, and the Arts). But the substance of Grand's work diverges from mine. He put heavy investment into all the sensorimotor aspects of intelligence that I'm completely leaving out of Acuitas. And Lucy's control systems, while not explicitly biomimetic, are very brain-inspired; whereas I draw inspiration from the human mind as an abstraction, and don't care much about our wetware at all. So it was fun to read an account of somebody who was working on roughly the same level as myself, but with different strategies in a different part of the problem space.

On the other hand, I was disappointed by how much of the book was theory rather than results. I got the most enjoyment out of the parts in which Grand described how he made a design decision or solved a problem. His speculations about what certain neuroscience findings might mean, or how he *could* add more functionality in the future, were less interesting to me ... because speculations are a dime a dozen, especially in this field. Now I do want to give Grand a lot of credit: he actually built a robot! That's farther than a lot of pontificators seem to get. But the book is frank about Lucy being very much unfinished at time of publication. Have a look around this blog. If there's a market for writeups of ambitious but incomplete projects, then where's *my* book deal?

In the book, Grand said that Lucy was nearly capable of learning from experience to recognize a banana and point at it with one of her arms. It sounded like she had all the enabling features for this, but wasn't doing it *reliably* yet. I did a little internet browsing to see how much farther Lucy got after the book went to press. From what I could find, her greatest accomplishment was learning the visual difference between bananas and apples, and maybe demonstrating her knowledge by pointing. [1] That's nothing to sneeze at, trust me. But it's a long way from what Grand's ambitions for Lucy seemed to be, and in particular, it leaves his ideas about higher reasoning and language untested. Apparently he did not just get these things "for free" after figuring out some rudimentary sensorimotor intelligence. Grand ceased work on Lucy in 2006, and she is now in the care of the Science Museum Group. [2]

Why did he stop? He ran out of money. Grand worked on Lucy full-time while living off his savings. The book's epilogue describes how NESTA came through just in time to allow the project to continue. Once the grant was expended, Lucy was set aside in favor of paying work. I doubt I can compete with Grand's speed of progress by playing with AI on the side while holding down a full-time job ... but I might have the advantage of sustainability. Grand started in 2001 and gave Lucy about five years. If you don't count the first two rudimentary versions, Acuitas is going on eight.

Grand identifies not neurons, but the repeating groups of neurons in the cortex, as the "fundamental unit" of general intelligence and the ideal level at which to model a brain. He doesn't use the term "cortical column," but I assume that's what he's referring to. Each group contains the same selection of neurons, but the wiring between them is variable and apparently "programmed" by experiential learning, prompting Grand to compare the groups with PLDs (the forerunners of modern FPGAs). He conceptualizes intelligence as a hierarchy of feedback control loops, an idea I've also seen expounded by Filip Piekniewski. [3] It's a framing I rather like, but I still want to be cautious about hanging all of intelligence on a single concept or method. I don't think any lone idea will get you all the way there (just as this one did not get Grand all the way there).
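To make the "hierarchy of feedback control loops" framing a little more concrete, here is a toy sketch of my own. It is not Grand's design or Piekniewski's, and every name and number in it (`ProportionalLoop`, `simulate`, the gains, the point-mass physics) is an assumption I made up for illustration. The idea it shows: a higher loop that wants a position never touches the motor directly; it only sets the goal for a lower loop that wants a velocity.

```python
from dataclasses import dataclass


@dataclass
class ProportionalLoop:
    """One feedback loop: output is proportional to (goal - measurement)."""
    gain: float

    def step(self, setpoint: float, measured: float) -> float:
        return self.gain * (setpoint - measured)


def simulate(target: float, steps: int = 200, dt: float = 0.05) -> float:
    """Drive a simulated one-axis joint toward `target` with two stacked loops.

    The gains and the physics are illustrative assumptions, not values
    taken from the book.
    """
    position_loop = ProportionalLoop(gain=2.0)  # higher level: cares about position
    velocity_loop = ProportionalLoop(gain=8.0)  # lower level: cares about velocity
    position, velocity = 0.0, 0.0
    for _ in range(steps):
        # The higher loop's output becomes the lower loop's setpoint.
        desired_velocity = position_loop.step(target, position)
        # Only the lowest loop produces an actual "motor" command.
        acceleration = velocity_loop.step(desired_velocity, velocity)
        # Toy physics: integrate acceleration, then velocity.
        velocity += acceleration * dt
        position += velocity * dt
    return position


if __name__ == "__main__":
    print(simulate(target=1.0))
```

Stacking more levels follows the same pattern: each new loop treats the one beneath it as its actuator. Whether that pattern scales up to anything like general intelligence is, of course, the open question.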

Lucy's body is actuated by electric motors, with linkages that help them behave more like "muscles." Grand didn't try pneumatics or hydraulics, because he thought they would be too difficult to control. I guess we'll see, eh?

Two chapters at the book's tail end move from technical affairs into philosophy. The first addresses safety concerns and fears of killer robots. While I agree with his basic conclusion that AI is not inevitably dangerous, I found his arguments dated and simplistic. I doubt they would convince anybody acquainted with recent existential risk discourse, which probably wasn't in the public consciousness when Grand was building Lucy. (LessWrong.com was launched in 2009; Yudkowsky's "Harry Potter and the Methods of Rationality," Scott Alexander's "Slate Star Codex" blog, and Bostrom's Superintelligence all came later. See my "AI Ideology" article series for more about that business.)

The final chapter is for what I'll call the "slippery stuff": consciousness and free will. Grand avoids what I consider the worst offenses AI specialists commit on these topics. He admits that he doesn't really know what consciousness is or what produces it, instead of advancing some untestable personal theory as if it were a certainty. And he doesn't try to make free will easier by redefining it as something that isn't free at all. But I thought his discussion was, once again, kind of shallow. The only firm position he takes on consciousness is to oppose panpsychism, on the grounds that it doesn't really explain anything: positing that consciousness pervades the whole universe gets us no farther toward understanding what's special about living brains. (I agree with him, but there's a lot more to the consciousness discussion.) And he dismisses free will as a logical impossibility, because he apparently can't imagine a third thing that is neither random nor feed-forward deterministic. He doesn't consider that his own imagination might be limited, or dig into the philosophical literature on the topic; he just challenges readers to define self-causation in terms of something else. (But it's normal for certain things to be difficult to define in terms of other things. Some realities are just fundamental.) It's one chapter trying to cover questions that could fill a whole book, so maybe I shouldn't have expected much.

On the whole, it was interesting to study the path walked by a fellow hobbyist and see what he accomplished - and what he didn't. I wonder whether I'll do as well.

Until the next cycle,

Jenny

[1] Dermody, Nick. "A Grand plan for brainy robots." BBC News Online Wales (2004). http://news.bbc.co.uk/2/hi/uk_news/wales/3521852.stm

[2] Science Museum Group. "'Lucy' robot developed by Steve Grand." 2015-477 Science Museum Group Collection Online. Accessed 2 January 2025. https://collection.sciencemuseumgroup.org.uk/objects/co8465358/lucy-robot-developed-by-steve-grand

[3] Piekniewski, Filip. "The Atom of Intelligence." Piekniewski's Blog (2023). https://blog.piekniewski.info/2023/04/16/the-atom-of-intelligence/